Dentists go through extensive training and education to become experts in their field. After completing a bachelor's degree, aspiring dentists must attend dental school for four years to earn a Doctor of Dental Medicine (DMD) or Doctor of Dental Surgery (DDS) degree. During this time, they learn about the anatomy of the mouth and teeth, how to diagnose and treat dental conditions, and how to perform procedures such as fillings, root canals, and extractions.

Once dentists complete their education, they must obtain a license to practice dentistry in their state. They may also choose to specialize in a particular area of dentistry, such as orthodontics, periodontics, or endodontics. Each specialty requires additional postgraduate education and training, typically in the form of a residency program.

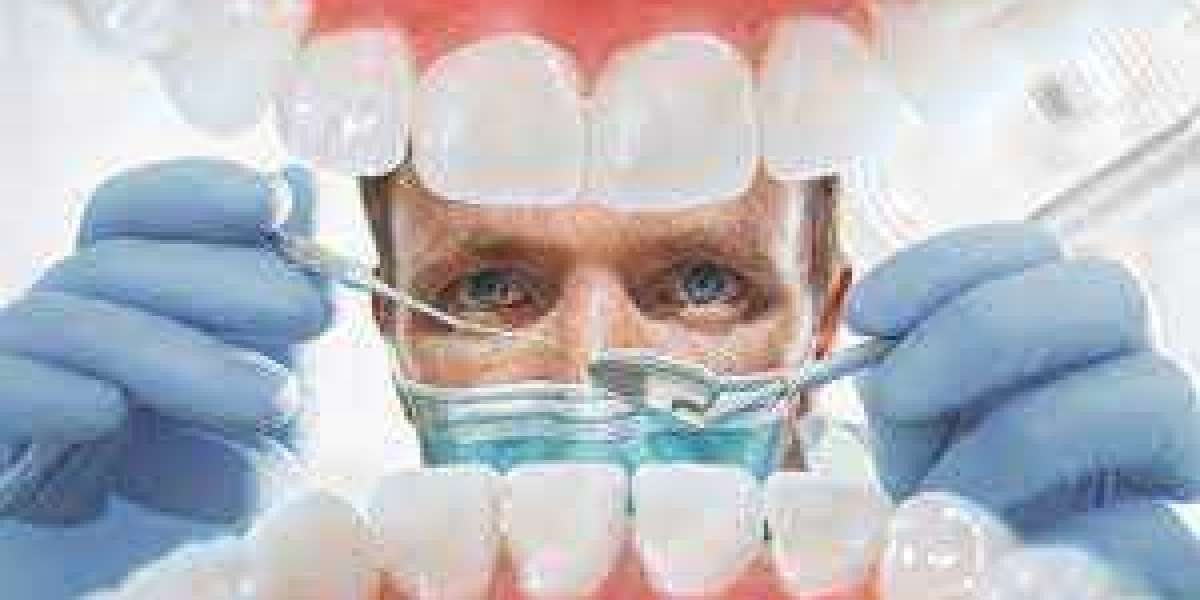

One of the primary responsibilities of dentists is to help their patients maintain good oral health. They do this by performing routine cleanings and checkups, during which they examine the teeth and gums for signs of decay, disease, or other problems. Dentists may also advise patients on how to keep their teeth and gums healthy at home, for example by brushing and flossing regularly.

When dental problems do arise, dentists are trained to diagnose and treat them. Common dental procedures include fillings to treat cavities, root canals to treat infections of the pulp inside a tooth's root, and extractions to remove damaged or decayed teeth. Dentists may also perform cosmetic procedures such as teeth whitening or dental bonding to improve the appearance of their patients' smiles.

In addition to treating individual patients, dentists also play a role in promoting public health. They may work with community organizations to provide education and resources on oral health, or participate in programs that provide dental care to underserved populations.

Overall, dentists are essential healthcare professionals who help people maintain good oral health and keep dental problems from becoming more serious. Their years of training prepare them for this role, and their work has a significant impact on the health and well-being of their patients and communities.